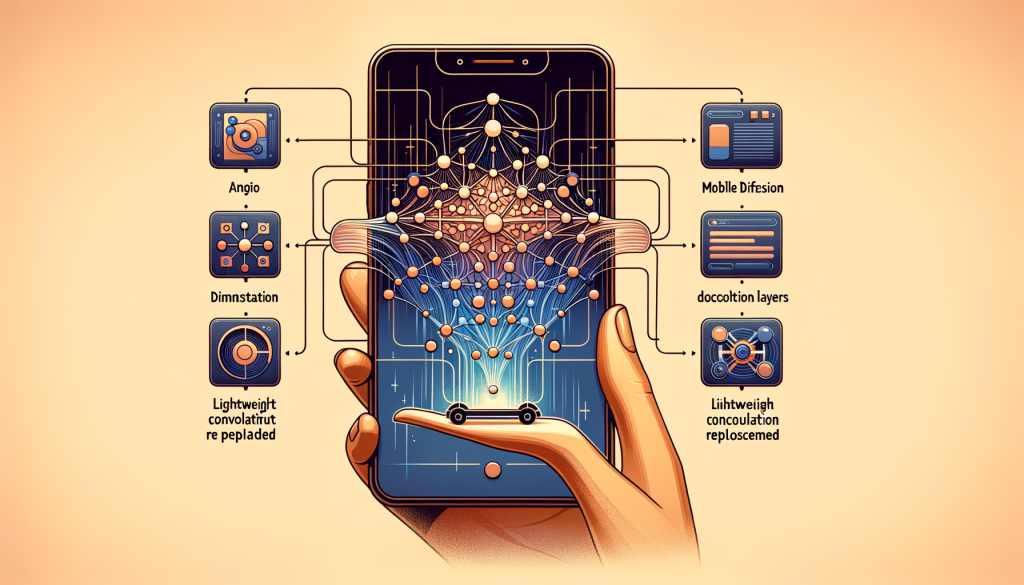

MobileDiffusion is an efficient latent diffusion model for rapid text-to-image generation on mobile devices, with a compact size of 520M parameters. Text-to-image diffusion models run slowly on mobile hardware for two reasons: the iterative denoising process requires many evaluations of the network, and each evaluation runs a complex architecture. Previous studies have focused on reducing the number of function evaluations, while architectural efficiency has received less attention.

MobileDiffusion addresses the architecture side by examining each component of the model and optimizing both the UNet and the image decoder. In the UNet, it concentrates more transformer blocks in the middle (lowest-resolution) stages and replaces regular convolution layers with lightweight separable convolution layers. The image decoder, which is the decoder half of the variational autoencoder (VAE) that maps latents back to pixels, is optimized as well.

On the sampling side, MobileDiffusion uses a DiffusionGAN hybrid that enables one-step sampling and significantly streamlines the training process. Measured on both iOS and Android devices, it achieves sub-second text-to-image generation on premium phones while still producing high-quality images.
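The two UNet changes described above both target compute cost, and a little arithmetic shows why. The sketch below is a back-of-envelope comparison, not MobileDiffusion's actual configuration: it assumes "separable convolution" means a depthwise k×k convolution followed by a 1×1 pointwise convolution, and the channel widths and feature-map sizes (640 channels at 16×16, 320 channels at 64×64) are hypothetical values chosen only for illustration. It counts weights and multiply-accumulates (MACs) for standard vs. separable convolutions, and the cost of one self-attention layer at a low vs. a high resolution.

```python
def conv_params(c_in: int, c_out: int, k: int) -> int:
    """Weights in a standard k x k convolution (bias ignored)."""
    return k * k * c_in * c_out


def separable_conv_params(c_in: int, c_out: int, k: int) -> int:
    """Weights in a depthwise k x k conv followed by a 1 x 1 pointwise conv (bias ignored)."""
    depthwise = k * k * c_in  # one k x k filter per input channel
    pointwise = c_in * c_out  # 1 x 1 conv that mixes channels
    return depthwise + pointwise


def conv_macs(params: int, h: int, w: int) -> int:
    """MACs for a stride-1, same-padded conv: each weight is used once per output pixel."""
    return params * h * w


def attention_macs(h: int, w: int, d: int) -> int:
    """Approximate MACs for one single-head self-attention layer over an h x w feature map.

    Q/K/V/output projections cost 4 * N * d^2, while the score matrix (Q K^T) and
    the weighted sum (A V) each cost N^2 * d, where N = h * w tokens.
    """
    n = h * w
    return 4 * n * d * d + 2 * n * n * d


if __name__ == "__main__":
    # Hypothetical layer: 640 -> 640 channels, 3 x 3 kernel, on a 16 x 16 feature map.
    std = conv_params(640, 640, 3)
    sep = separable_conv_params(640, 640, 3)
    print(f"standard conv:  {std:,} weights, {conv_macs(std, 16, 16):,} MACs")
    print(f"separable conv: {sep:,} weights, {conv_macs(sep, 16, 16):,} MACs")
    print(f"reduction: {std / sep:.2f}x")

    # Hypothetical attention layers: the quadratic N^2 term dominates at high resolution,
    # which is why attention is cheaper when concentrated in the low-resolution middle.
    low = attention_macs(16, 16, 640)
    high = attention_macs(64, 64, 320)
    print(f"attention @16x16, d=640: {low:,} MACs")
    print(f"attention @64x64, d=320: {high:,} MACs ({high / low:.1f}x more)")
```

For a k×k kernel with C input and output channels, the separable variant shrinks weights by a factor of k²C / (k² + C), which approaches k² (9× for 3×3) as C grows. Attention behaves differently: quadrupling the side length multiplies the token count N by 16 and the N² term by 256, so the same layer becomes far more expensive at high resolution even with fewer channels.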